Most SQL Developer guides focus on syntax: Joins, Subqueries, and basic aggregation. Those are foundational. They’re also not enough anymore.

Here’s what changed: Modern data warehouses charge by compute usage. Bad queries don’t just slow down servers—they spike your company’s monthly cloud bill. AI tools now write basic queries. Your value isn’t typing SELECT statements; it’s architecting reliable, cost-efficient pipelines.

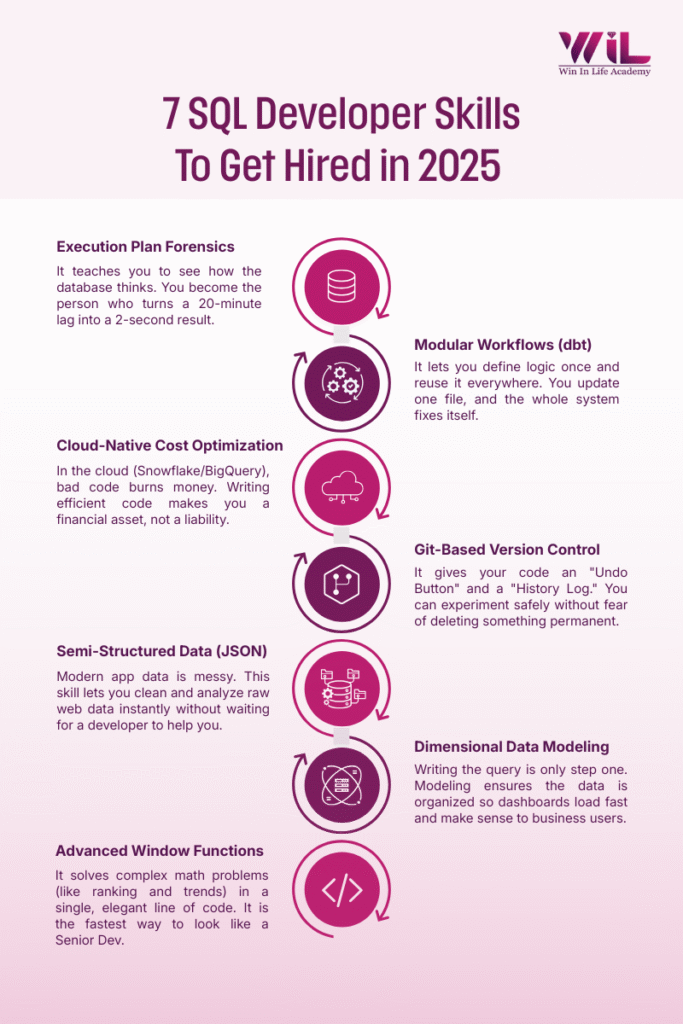

The 7 SQL developer skills below are what separate candidates who get offers from those stuck sending applications into the void.

1. Execution Plan Forensics

What it is: An Execution Plan shows you how the database processes your query—whether it’s using efficient indexes or scanning every single row. Think of it as the database showing its work, like a math teacher asking you to explain your solution step-by-step.

Why this skill commands premium salaries: Most junior developers hit a performance wall and blame the database or server. “It must be the network,” they say. Or “Maybe the table is just too big.” Meanwhile, the actual issue is sitting right there in the execution plan—a missing index, an inefficient join order, or a nested loop processing millions of unnecessary rows. The ability to diagnose this independently is what separates ₹5 LPA candidates from ₹8 LPA candidates in the same interview.

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Stares at code assuming syntax error. Reruns query hoping it was a “glitch.” Still takes 20 minutes. Escalates to senior developer. | Checks Execution Plan immediately. Spots Full Table Scan on growing table. Identifies missing index on the JOIN column. Adds Index. Runtime drops to 2 seconds. Documents the fix. |
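To make the Pro workflow concrete, here is a minimal sketch using Python's built-in sqlite3 module. The `orders` table, its columns, and the index name are hypothetical, and SQLite's `EXPLAIN QUERY PLAN` stands in for whatever plan viewer your own database offers (the output format differs between engines).

```python
import sqlite3

# Hypothetical schema for illustration: an orders table filtered by customer_id.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
    [(i % 1000, i * 1.5) for i in range(10_000)],
)

QUERY = "SELECT SUM(amount) FROM orders WHERE customer_id = 42"


def plan(connection, sql):
    """Return the human-readable detail text of each step in the query plan."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return [row[3] for row in rows]  # the fourth column holds the description


# Without an index, the plan reports a scan of the whole table.
plan_before = plan(conn, QUERY)

# Add the missing index on the filter column, then check the plan again.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan(conn, QUERY)

print("before:", plan_before)
print("after: ", plan_after)
```

The point is the habit, not the tool: read the plan, change one thing, and read the plan again to confirm the scan became an index search.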

The interview litmus test: “Walk me through how you’d optimize a slow query” isn’t theoretical. They want to hear the words “execution plan” in your answer within the first 30 seconds. If you fumble here, the interview is essentially over: they’ve categorized you as someone who writes code without understanding performance. In technical rounds at Flipkart, Amazon, or Razorpay, this question appears in 8 out of 10 SQL interviews.

Market demand indicator: According to 2025 job postings across Naukri and LinkedIn, 64% of mid-level SQL Developer roles explicitly mention “query optimization” or “performance tuning” in requirements. Five years ago, that number was 31%. The shift is clear: optimization is no longer optional; it’s a baseline expectation.

2. Modular Transformation Workflows (dbt)

What it is: dbt (Data Build Tool) lets you break massive SQL scripts into reusable modules that reference each other. Instead of writing one 2,000-line script that does everything, you write 20 small scripts that each do one thing well, and they connect automatically.

Why this skill opens doors: Finance changes the definition of “Net Revenue” to exclude shipping costs. That metric appears in 15 reports across the company. Without dbt, you manually update 15 files and pray you didn’t miss one. With dbt, you update one file and rebuild. Done in 5 minutes. Companies using dbt are scaling fast—they’ve already solved basic data problems and are now focused on architecture and governance. These are exactly the companies paying ₹9-12 LPA for Analytics Engineers.

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Opens 15 SQL files manually. Finds and replaces calculation in each. Misses one file. Reports show conflicting numbers. Spends hours debugging. | Updates logic in one central dbt model (net_revenue.sql). Runs dbt build command. Change propagates automatically to all 15 downstream reports. Runs tests to verify accuracy. Zero errors. |
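The "define once, reference everywhere" idea can be sketched without dbt itself. The example below is only an analogy: it uses a SQLite view as the single shared definition, and the table, column names, and report queries are hypothetical. In a real dbt project the definition would live in a model file and downstream models would point at it with `{{ ref('net_revenue') }}`.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (region TEXT, gross REAL, discount REAL, shipping REAL)"
)
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?, ?)",
    [
        ("North", 100.0, 10.0, 5.0),
        ("North", 200.0, 0.0, 15.0),
        ("South", 50.0, 5.0, 5.0),
    ],
)


def define_net_revenue(connection, expression):
    """(Re)create the one shared definition of net revenue as a view."""
    connection.execute("DROP VIEW IF EXISTS net_revenue")
    connection.execute(
        f"CREATE VIEW net_revenue AS "
        f"SELECT region, {expression} AS amount FROM orders"
    )


# Two hypothetical downstream reports. Neither repeats the formula.
REPORT_TOTAL = "SELECT SUM(amount) FROM net_revenue"
REPORT_BY_REGION = (
    "SELECT region, SUM(amount) FROM net_revenue GROUP BY region ORDER BY region"
)

define_net_revenue(conn, "gross - discount")
total_v1 = conn.execute(REPORT_TOTAL).fetchone()[0]

# Finance changes the definition: shipping costs are now excluded as well.
define_net_revenue(conn, "gross - discount - shipping")
total_v2 = conn.execute(REPORT_TOTAL).fetchone()[0]
by_region_v2 = conn.execute(REPORT_BY_REGION).fetchall()

print(total_v1, total_v2, by_region_v2)
```

Both reports pick up the new formula after a single change, which is the property dbt gives you at project scale, along with tests and dependency ordering.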

Salary impact data: A 2024 analytics survey by DataEngConf showed that Analytics Engineers proficient in dbt earn on average 28% more than SQL Developers with equivalent years of experience. In Indian metros, that translates to a ₹2.5-3.5 LPA difference at the 3-5 year experience mark.

Interview advantage: Casually mention in an interview, “In my last project, I used dbt to modularize our transformation logic and set up CI/CD tests,” and you’ve just jumped two salary bands above someone who only knows vanilla SQL. At companies like Swiggy, CRED, or Meesho, dbt knowledge often fast-tracks candidates through screening rounds because it signals an understanding of modern workflows.

3. Cloud-Native Cost Optimization

What it is: Cloud warehouses bill for usage. BigQuery’s on-demand pricing charges by data scanned, while Snowflake charges for the compute time your warehouse runs. Either way, a SELECT * that reads rows and columns you don’t need costs money. Techniques like Partitioning (organizing data by date/region) and Clustering (sorting data within partitions) ensure the database only opens the files it needs.

Why this skill translates to job security: Ten years ago, companies bought a database server and that was it—one-time cost. If you wrote a bad query, the server just ran slower. Today, companies rent compute power by the second. If you write a bad query, the company literally pays more money that month. Your manager gets an alert: “Warehouse costs increased 40% this week.” They investigate. They find your query. You’re now the person who cost the company ₹50,000 with a single line of code. This changes the job from “can you write SQL?” to “can you write SQL responsibly?”

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Writes SELECT * and filters after scanning entire table. Query works but processes terabytes of unnecessary data. Cloud bill spikes. No one notices until month-end. | Uses Clustering to target specific partitions (e.g., WHERE region = 'North' AND date >= '2025-01-01'). Database skips 90% of files using metadata. Faster results, fraction of cost. Monitors query performance dashboard. |
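Here is a toy model of how metadata-based pruning skips files. It is a simplified sketch in plain Python, not how any particular warehouse is implemented: the `Partition` class, the ten-partition layout, and the per-partition min/max dates are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Partition:
    """One storage file plus the small metadata record kept about it."""
    region: str
    min_date: date
    max_date: date
    rows: list  # (region, date, amount) tuples


def build_partitions():
    """Create 5 regions x 2 months = 10 partitions of 28 daily rows each."""
    partitions = []
    for region in ("North", "South", "East", "West", "Central"):
        for first_day in (date(2024, 12, 1), date(2025, 1, 1)):
            days = [first_day + timedelta(days=i) for i in range(28)]
            rows = [(region, d, 100.0) for d in days]
            partitions.append(Partition(region, days[0], days[-1], rows))
    return partitions


def pruned_scan(partitions, region, start):
    """Return matching rows and the number of partitions actually opened."""
    opened = 0
    matches = []
    for part in partitions:
        # Pruning: decide from metadata alone, without reading part.rows.
        if part.region != region or part.max_date < start:
            continue
        opened += 1
        matches.extend(row for row in part.rows if row[1] >= start)
    return matches, opened


partitions = build_partitions()
matches, opened = pruned_scan(partitions, "North", date(2025, 1, 1))
print(f"opened {opened} of {len(partitions)} partitions, {len(matches)} rows matched")
```

With this layout the filter touches one partition out of ten. A query that filters on a column the data is not organized by would have to open all ten.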

The business acumen angle: Cost optimization isn’t really about saving money—it’s about understanding how the system works. Once you understand that cloud databases store data in micro-partitions and use metadata for pruning, you start writing queries that align with that architecture. You’re no longer fighting the system; you’re working with it. That mindset shift is what separates mid-level from senior developers.

Interview reality check: More companies are asking cost-optimization questions in technical rounds now. “How would you reduce the cost of this query in Snowflake?” If you answer with partitioning strategies, clustering keys, or materialized views, you’ve demonstrated business acumen, not just technical skill. That’s what gets you the offer. At companies like Zerodha, PhonePe, or CRED where cloud bills run into crores annually, this question is standard in Analytics Engineer interviews.

4. Stored Procedures & Performance Tuning

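What it is: Stored Procedures are named SQL routines stored in the database. They often run faster than the same logic sent statement by statement from an application, because the engine can reuse a cached execution plan and the work stays on the server. They also encapsulate complex business logic and can include control flow (IF/ELSE, loops, error handling).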

Why this skill remains non-negotiable: Production systems need repeatable, performant operations. A well-written stored procedure with proper indexing, temp tables, and transaction management runs reliably at scale without degrading over time. While newer companies favor dbt and cloud-native approaches, the vast majority of enterprise infrastructure—especially in banking, insurance, healthcare, and government sectors—still runs on traditional RDBMS with stored procedures as the backbone. Ignoring this skill means ignoring 60% of the Indian job market.

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Writes 500-line script with nested cursors (row-by-row processing). Runs for hours on large datasets. No error handling: if something breaks mid-process, data is left in an inconsistent state. | Breaks logic into set-based operations (processes chunks, not rows). Uses temp tables strategically to stage intermediate results. Wraps in transactions with BEGIN/COMMIT/ROLLBACK. Completes in minutes with safe error recovery. Logs execution time and row counts. |
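The two Pro habits, set-based updates and transactional safety, can be sketched as follows. SQLite has no stored procedures, so a Python function stands in for the procedure body here; the `accounts` and `fee_log` tables and the `apply_fee` name are hypothetical. In a server database the same shape would be written in the engine's procedural dialect with BEGIN/COMMIT/ROLLBACK.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "id INTEGER PRIMARY KEY, "
    "balance REAL NOT NULL CHECK (balance >= 0))"
)
conn.execute("CREATE TABLE fee_log (fee REAL)")
conn.executemany(
    "INSERT INTO accounts (id, balance) VALUES (?, ?)",
    [(1, 100.0), (2, 50.0), (3, 10.0)],
)
conn.commit()


def apply_fee(connection, fee):
    """Log the fee and charge every account in one set-based UPDATE.

    Returns the number of accounts updated, or 0 if the batch was rolled back.
    """
    try:
        # The connection context manager commits on success and rolls back
        # every statement in the block if an exception is raised.
        with connection:
            connection.execute("INSERT INTO fee_log (fee) VALUES (?)", (fee,))
            cursor = connection.execute(
                "UPDATE accounts SET balance = balance - ?", (fee,)
            )
            return cursor.rowcount
    except sqlite3.IntegrityError:
        return 0  # CHECK constraint failed; nothing from this batch persisted


first = apply_fee(conn, 5.0)    # succeeds for all three accounts
second = apply_fee(conn, 20.0)  # would push account 3 below zero, so it rolls back

balances = [row[0] for row in conn.execute("SELECT balance FROM accounts ORDER BY id")]
log_count = conn.execute("SELECT COUNT(*) FROM fee_log").fetchone()[0]
print(first, second, balances, log_count)
```

Note that the failed batch leaves no trace: the log row inserted before the failing UPDATE is rolled back too, which is exactly the "safe error recovery" the cursor-based script lacks.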

The performance tuning dimension: Writing a stored procedure is easy. Writing one that doesn’t grind the database to a halt is hard. You need to understand: set-based operations (avoid cursors), indexing strategy (which columns to index), temp tables vs. table variables, and execution plan analysis (back to Skill #1). This is where juniors get filtered out in technical rounds at service companies.

Industry-specific demand: Indian product companies (Flipkart, Swiggy, Razorpay) and service companies (TCS, Infosys, Wipro, Cognizant) heavily use stored procedures in legacy and hybrid systems. According to LinkedIn’s 2025 Emerging Jobs Report for India, 54% of SQL Developer job postings in the IT services sector explicitly list “stored procedures” as a required skill. If you’re interviewing at these companies, you will be asked to write or optimize a stored procedure in the technical round.

5. Semi-Structured Data Manipulation (JSON)

What it is: Modern apps send data in JSON: nested, flexible formats that don’t fit into clean tables. Dialect-specific functions and clauses such as FLATTEN and PARSE_JSON (Snowflake), JSON_EXTRACT (MySQL, BigQuery), and LATERAL VIEW (Hive, Spark SQL) unwrap these structures so you can query them like regular SQL tables.

Why this skill is your competitive edge: Data used to arrive in CSV files or database exports—nice, clean rows and columns. But modern systems generate data in JSON because it’s flexible. A user’s session data might include nested objects:

{"user": {"id": 123, "preferences": {"theme": "dark"}}, "events": [{"type": "click"}, {"type": "purchase", "amount": 500}]}

You can’t just SELECT * FROM json_data and get a clean table. You need to know how to unnest arrays, extract nested keys, and flatten hierarchical structures. This isn’t advanced computer science—it’s basic hygiene for working with modern data sources.

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Stuck because standard SQL doesn’t work on JSON text. Asks Python developer or Data Engineer to clean data first. Creates dependency. Project delays by 2 days while waiting. | Uses native JSON functions (FLATTEN, JSON_EXTRACT_PATH_TEXT, JSON_TABLE). Parses nested text directly in SQL query. Unnests arrays into rows. Delivers analysis immediately, the same day. |
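Here is what unnesting the session payload from earlier in this section can look like in practice. This sketch uses SQLite's `json_extract` and `json_each`, run through Python's sqlite3 module, and assumes your SQLite build includes the JSON functions (most recent builds do). The `sessions` table is hypothetical, and function names differ in Snowflake, BigQuery, and other engines.

```python
import json
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sessions (payload TEXT)")

payload = {
    "user": {"id": 123, "preferences": {"theme": "dark"}},
    "events": [{"type": "click"}, {"type": "purchase", "amount": 500}],
}
conn.execute("INSERT INTO sessions VALUES (?)", (json.dumps(payload),))

rows = conn.execute(
    """
    SELECT
        json_extract(s.payload, '$.user.id')                AS user_id,
        json_extract(s.payload, '$.user.preferences.theme') AS theme,
        json_extract(e.value, '$.type')                     AS event_type,
        json_extract(e.value, '$.amount')                   AS amount
    FROM sessions AS s,
         json_each(s.payload, '$.events') AS e   -- one output row per array element
    ORDER BY e.key
    """
).fetchall()

for row in rows:
    print(row)
```

Each element of the `events` array becomes its own row, with the user-level fields repeated alongside it. Keys that are missing from an element, like `amount` on the click event, simply come back as NULL.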

The autonomy factor: JSON manipulation is what transforms you from “query executor” to “independent analyst.” Without this skill, you’re blocked every time data arrives in a non-traditional format—which is increasingly common. With it, you can handle API responses, application logs, IoT sensor data, and webhook payloads without external dependencies. That autonomy is what hiring managers pay for.

Sector-specific relevance: E-commerce (Amazon, Flipkart), fintech (Razorpay, Paytm, CRED), and SaaS companies (Freshworks, Zoho, Chargebee) deal with JSON constantly. If you’re targeting these sectors, this skill is non-negotiable. A 2024 survey by Analytics India Magazine found that 81% of product companies working with modern applications cite “semi-structured data handling” as a required skill for data roles, up from 47% in 2020.

6. Dimensional Data Modeling (Star Schema)

What it is: Organizing data into Facts (metrics: sales amount, clicks, transactions) and Dimensions (context: product, date, region, customer). This structure—called Star Schema—optimizes dashboards and reports for speed and usability.

Why this skill demonstrates strategic thinking: If you dump everything into one giant flat table, Power BI or Tableau dashboards crawl. Proper modeling makes BI tools fast and intuitive for business users. More importantly, it makes your data understandable. A well-designed data model is self-documenting: business users can explore it without needing you to explain every join. This is the difference between being tactical (writing queries on request) and strategic (building infrastructure that scales).

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Provides flat file: one wide table with repeated text (product name copied in every row). BI tool struggles with redundancy. Dashboard is sluggish. Filters don’t work intuitively. | Designs Star Schema. Separates metrics (fact_sales: transaction_id, amount, quantity) from descriptive attributes (dim_product, dim_customer, dim_date). Dashboard loads instantly. Business users navigate intuitively without SQL knowledge. |
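A small Star Schema is easy to sketch end to end. The example below reuses the table names from the comparison above (`fact_sales`, `dim_product`, `dim_date`) but the columns and sample rows are made up for illustration, and `dim_customer` is left out to keep it short. It runs in SQLite through Python's sqlite3 module.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Dimensions hold descriptive attributes, stored once per entity.
    CREATE TABLE dim_product (
        product_id   INTEGER PRIMARY KEY,
        product_name TEXT,
        category     TEXT
    );
    CREATE TABLE dim_date (
        date_id   INTEGER PRIMARY KEY,
        full_date TEXT,
        month     TEXT
    );
    -- The fact table holds metrics plus keys pointing at the dimensions.
    CREATE TABLE fact_sales (
        transaction_id INTEGER PRIMARY KEY,
        product_id     INTEGER REFERENCES dim_product (product_id),
        date_id        INTEGER REFERENCES dim_date (date_id),
        quantity       INTEGER,
        amount         REAL
    );

    INSERT INTO dim_product VALUES
        (1, 'Laptop', 'Electronics'),
        (2, 'Desk', 'Furniture');
    INSERT INTO dim_date VALUES
        (20250101, '2025-01-01', '2025-01'),
        (20250201, '2025-02-01', '2025-02');
    INSERT INTO fact_sales VALUES
        (1, 1, 20250101, 1, 900.0),
        (2, 2, 20250101, 2, 300.0),
        (3, 1, 20250201, 1, 950.0);
    """
)

# A typical dashboard query: slice the metric by attributes from two dimensions.
report = conn.execute(
    """
    SELECT d.month, p.category, SUM(f.amount) AS revenue
    FROM fact_sales AS f
    JOIN dim_product AS p ON p.product_id = f.product_id
    JOIN dim_date    AS d ON d.date_id    = f.date_id
    GROUP BY d.month, p.category
    ORDER BY d.month, p.category
    """
).fetchall()

for row in report:
    print(row)
```

The product name and category appear once in `dim_product` rather than on every sales row, and every report follows the same pattern: start at the fact table and join outward to the dimensions you want to slice by.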

Interview litmus test: They might show you a messy dataset and ask, “How would you structure this for reporting?” The answer they want: “I’d design a Star Schema with a central fact table for metrics and dimension tables for descriptive attributes.” If you can sketch this on a whiteboard and explain the benefits (performance, usability, maintainability), you’ve demonstrated data maturity beyond your experience level.

The foundational knowledge: This isn’t a trendy new tool. Star Schema has been the gold standard for data warehousing for 30+ years because it works. Companies like Walmart, Amazon, and every major enterprise use variations of this design. Learning it teaches you to think like a data architect, not just a query writer.

7. Advanced Window Functions

What it is: Window Functions (RANK, LEAD, LAG, ROW_NUMBER, NTILE, FIRST_VALUE) let you perform calculations across a “window” of rows without collapsing the data like GROUP BY does. You can calculate running totals, moving averages, rankings, and comparisons between rows—all in a single query.

Why this skill appears in every technical interview: Business questions are rarely simple aggregates. They want: “Show me a 7-day rolling average of sales to smooth out daily fluctuations.” Or: “Rank products by revenue within each category.” Or: “Compare this month’s sales to last month’s for every region.” Without window functions, you’re writing 50 lines of self-joins and subqueries. With them, it’s one readable line. More importantly, window functions test whether you understand SQL as a declarative language or just memorize syntax patterns.

| ❌ Rookie Approach | ✅ Pro Approach |
| --- | --- |
| Attempts multiple self-joins or nested subqueries to calculate rolling average or rankings. Query is slow, hard to read, nearly impossible to debug or modify later. | Uses Window Function: AVG(sales) OVER (ORDER BY date ROWS BETWEEN 6 PRECEDING AND CURRENT ROW) for rolling 7-day average. One readable line. Executes efficiently. Easy to modify window size. |
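The rolling-average expression from the Pro column can be run as-is. This sketch uses SQLite (3.25 or newer, which added window functions) through Python's sqlite3 module; the `daily_sales` table and its values are invented. One assumption worth stating: `ROWS BETWEEN 6 PRECEDING AND CURRENT ROW` counts rows, not calendar days, so it is a true 7-day average only when there is exactly one row per day.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE daily_sales (date TEXT PRIMARY KEY, sales REAL)")

# Ten consecutive days with sales of 10, 20, ..., 100.
conn.executemany(
    "INSERT INTO daily_sales VALUES (?, ?)",
    [(f"2025-01-{day:02d}", float(day * 10)) for day in range(1, 11)],
)

rows = conn.execute(
    """
    SELECT
        date,
        sales,
        AVG(sales) OVER (
            ORDER BY date
            ROWS BETWEEN 6 PRECEDING AND CURRENT ROW
        ) AS rolling_7d
    FROM daily_sales
    ORDER BY date
    """
).fetchall()

for row in rows:
    print(row)
```

For the first six days the window holds fewer than seven rows, so the average covers only the days seen so far. Changing the window size means changing a single number in the frame clause.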

Salary band indicator: According to interview feedback data collected across tech hiring platforms, candidates who fluently solve window function problems in technical rounds receive offers averaging ₹1.8-2.5 LPA higher than those who struggle with them, even when other qualifications are comparable. This single skill is a reliable proxy for “SQL maturity” in hiring decisions.

The learning curve reality: Window functions feel unintuitive at first because the syntax is dense. OVER, PARTITION BY, ORDER BY, frame clauses (ROWS BETWEEN)—there’s a lot happening in one line. But here’s the thing: Once you understand the mental model (you’re defining a “sliding window” of rows to calculate over), it clicks. You’ll start seeing use cases everywhere. The time investment is 3-5 hours of focused practice to internalize core patterns.

From SQL Developer to Analytics Engineer: Understanding the Shift

The title “SQL Developer” still exists on job boards, but the role underneath it has fundamentally changed. When we talk to hiring managers, here’s what they tell us: “We’re not looking for someone who writes queries on request. We need someone who builds the infrastructure that powers decisions.”

That’s the Analytics Engineer—a role that sits perfectly between Data Analyst (who finds insights) and Data Engineer (who builds pipelines at scale). You’re applying software engineering best practices—version control, testing, modular code, CI/CD—to SQL workflows.

Why this matters for your career: Analytics Engineers are in high demand because they solve a painful problem—most companies have messy data infrastructure held together by duct tape and tribal knowledge. If you can bring order to that chaos, you become indispensable.

The salary ceiling is higher. The work is more interesting (you’re building, not just executing). And the skills are transferable—once you understand modern data workflows, you can work in any industry.

SQL is the Start, Not the Finish Line

While you can certainly start your career as a traditional SQL Developer, staying there is risky. If there is one takeaway from this list, it is this: SQL is no longer just a scripting language; it is the primary interface for modern data engineering. The “basic query” aspect of the job is the part most easily automated by AI. To become an indispensable asset to a business, you must view SQL as your foundation, not your entire house. The professionals who survive and thrive are the ones who continuously upskill into Data Engineering, Cloud Architecture, and AI-integrated workflows. The gap between a “fresh graduate” and a “hirable professional” is simply the willingness to learn these modern tools.

Ready to bridge that gap?

SQL is just one powerful tool within the larger ecosystem of Data Science. To truly command the market, you need to understand how data flows from a database all the way to predictive models.

Our PG Diploma in Data Analytics + AI ML is designed for this exact reality. We don’t just teach you isolated syntax; we teach you the complete pipeline—from Data Engineering and SQL mastery to Machine Learning implementation.